Hi everyone!

I’m finally an official Unity Certified Developer! I just returned from the Unite LA conference and a short vacation, and I thought I’d share some of my highlights with you. I decided to go to Unite LA for multiple reasons: I really wanted to be a Unity Certified Developer, going to LA would allow me to meet face to face with one of my best clients, and I was hoping to meet some like-minded developers. Mission accomplished on all fronts! Plus my wife and I were able to have some time to explore LA.

This was my first Unity conference. It was actually my first time at any professional conference where I was truly interested in the subject matter, and for the most part it was quite interesting. The biggest highlight for me was actually the keynote. The heads of Unity came out to discuss the many, MANY improvements coming in the near future (some already available in experimental betas). They’ll be improving the navigation system, and we’ll finally be able to have our NavMesh Agents walk up walls! They’re improving the import pipeline so we don’t have to wait forever to re-import when switching platforms. The lighting build pipeline will also be improved so that baking happens on important (visible) areas first and then layers on complexity, instead of making us sit through an unknown bake time (progress will soon be shown as a percentage complete). They also talked a lot about multithreaded jobs, with the engine taking care of much of the work for us.

They showed a really impressive demonstration. First they started with the current engine and showed a school of 2,000 fish swimming around the scene (fleeing from a shark), rendering at about 30 fps. They then bumped the number of fish up to 20,000, and it dropped to what looked like less than 10 fps until they turned on the new job system. Then BLAM! It was running at a solid 60+ fps with 20,000 fish on screen, all swimming in various patterns. Pretty hefty improvements, and everyone was quite amazed. I’m confident it will help with some of the performance issues we’re having in the larger scenes of Vuzop, where we’re moving 1,000+ objects and activating hundreds of particle systems. I can hardly wait.

Some other notable announcements from the keynote were:

- Unity Collaborate is now in open beta: If you’re not aware, Collaborate allows multiple people to work on the same project simultaneously and immediately sync changes. I haven’t used it yet, but I’m interested in seeing how it will work alongside version control systems like Git.

- Unity Connect is now in open beta: This platform is for finding employees and work. It’s pretty nifty and emphasizes portfolio content. I hope it works as well as the forums have for me, since I’ve gotten a lot of my work from there.

- GPU instancing (5.5 and 5.6): We should see some huge performance improvements from this. It’ll all be handled automatically by the engine, but a high-level API will be available for those who want to tweak the system.

- Video (5.6): Finally! MovieTexture will be deprecated in favor of a completely new cross-platform system. Sorry to those plugin developers who’ve worked hard over the years to fill this gap, but I’m very happy Unity is finally addressing this shortcoming.

- Timeline (unknown release): This is a really cool new tool that lets you orchestrate multiple animations. Perfect for cutscenes. Unfortunately, I didn’t get a good picture of it; it basically looks like a typical dope sheet, but with multiple actors/tracks. Very cool!

- WebVR (5.6 – preview): Unity is working to bring WebVR into the engine. Hopefully this will be a viable alternative that will actually work on multiple VR devices. It’s hard to tell at this point, but hopes are high!

- Unity Best Practices: https://unity3d.com/learn/tutorials/topics/best-practices Finally! We have some official tutorials and guides on best practices. There isn’t a ton there yet, but Unity plans to grow this section over time. Hopefully this will eliminate a lot of guesswork and performance testing for many of us.

You can see the full roadmap at https://unity3d.com/unity/roadmap. Add its RSS feed to Feedly and don’t miss anything!

Next on my list of notable talks was “Test Guided Development” by Michael Starks (https://github.com/mstarks). Michael talked about how Test-Driven Development has many shortcomings when building games (I actually think it really slows down the process), and he suggests a newer idea: Test Guided Development. With Unity there’s little reason to test many systems since the engine already handles them well (and you couldn’t fix them if you tried – just use them right!). So we focus on what can be tested and don’t sweat the small stuff. Check out the talk and sample project at the GitHub link above. It’s a nice example of how to use Unity’s test tools, and it’s something we should all practice since it will save a lot of headaches as our games/apps grow.

Another really cool talk about code was “Static Code Analysis” by Vinny DaSilva (https://github.com/vad710/UnityEngineAnalyzer). Vinny and co. have taken the Roslyn code analyzer and begun applying it to Unity’s API. It scans your code for potential issues and performance bottlenecks. It’s really cool, and I’m hoping to contribute to the project by tying the interface directly into the Unity Editor instead of viewing the results in a terminal or web browser. Be sure to check this project out on GitHub and contribute to it (even if that just means requesting features or reporting issues). This should help us all write better code!

Here are some cool tools and third-party features that were showcased:

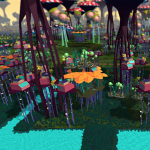

- Mantle: This is a 3D environment-building tool that can hook into mapping data like Google Maps so you can procedurally create environments. It was really neat to watch them transform LA into a weird land with mushrooms and surreal plants, all on the fly. Check it out here: http://www.mantle.tech/

- NVIDIA VRWorks: NVIDIA has announced that they are working to bring their VRWorks and GameWorks libraries to Unity. One really cool thing is that they are improving the VR rendering pipeline so that it renders the peripheral regions of the view at a lower (blurrier) pixel density. This saves a lot of rendering time since our eyes can’t really focus on those areas. It has a lot of other cool features, like single-pass stereo, which should speed up rendering considerably. There’s even a really neat screen/video grabber in the API for full 360-degree shots. Check it out here: https://developer.nvidia.com/nvidia-vrworks-and-unity

- NeoFur (https://www.neoglyphic.com/neofur): For those of you needing a fur solution, this is pretty awesome. It produces really great-looking fur and soft-body surfaces. AND it’s 30% off for Unity until the end of November. So if you’re planning a game that will need fur, I’d suggest grabbing it!

I was torn on which talks to attend; they all seemed really cool. Some excellent games to check out are Project Wight and Firewatch. You can see a full list of award winners here: https://unity3d.com/awards/2016/winners. Project Wight looks really cool – I love being able to play the “monster” in a game, and this game will offer a very different perspective from any first-person game I’ve seen. One thing that impressed me was that the “finishing” moves are not cutscene-like moves like the ones Bethesda did in the new Doom; they are full animations that will end up looking different every time.

There was a lot of focus on VR/AR development. Unity is integrating VR into the Editor so you can do level design with a headset on. Really nifty. There was a presentation by Microsoft for the HoloLens, and it was neat but overall unimpressive. They showed the placement of a virtual cube (which was quite shaky) and then putting a “handprint” on it (which didn’t work very well either). I guess they were trying to illustrate how easy it is to do some really neat things with Unity, but I would have preferred a more polished presentation. Plus the low FOV (22 degrees) and the high price of the hardware kind of ruin it. On the opposite end of the spectrum, we got to see the Google Daydream headset. It’s lightweight, really comfortable, and only $80 (with a controller). Since it uses an Android phone, you can also do AR with it (as long as the phone has a rear-facing camera). MS needs to step up their game.

The venue and the event itself were quite awesome. We were at the Loews Hotel on Hollywood Blvd., which had a lot of things to do and places to eat within walking distance. The free food was pretty good, and they offered plenty of drinks and snacks. We even had a party at Universal Studios with an open bar and free Panda Express (which was actually quite decent, and that’s a big compliment from me because I think Panda Express is pretty awful). They even gave us unlimited access to the Transformers ride, Jurassic Park 3D, and King Kong. I only went on the Transformers ride since I’m old and had hurt my back before the trip, but it was fun. They also had Optimus Prime posing for photos. He stole the show!

All in all I had a lot of fun, learned about a lot of useful and neat projects, got to finally meet a great client face-to-face, and got to make some new friends. It was a great experience and I’d highly recommend it to anyone interested in Unity or even just game development (there were a few hobbyists there too!).

If you’re looking to get certified as a Unity Developer and need some help studying, shoot me a message! I provide one-on-one tutoring and can coach you through it from direct, personal experience.

Oh yeah, and here’s the cool swag I got for getting certified and attending the conference!

As always, thanks for reading and don’t forget to subscribe to my email list to hear about new blog posts!